Hardware Rooted Sovereignty Workshop

Verifiable Safe & Trusted AI Infrastructure for the Global South

Date: 16 February 2026, 11:25 am - 12:30 pm

Location: Bharat Mandapam Meeting Room No. 17, New Delhi

The Secure AI Futures Lab (SAFL) is a dedicated hub for convening research dialogue and accelerating expertise for the safe and beneficial development and deployment of advanced AI. SAFL will have iterations across tech hubs in India and South Asia, including New Delhi, Bengaluru, Chennai, Hyderabad, Mumbai, and Singapore.

The India AI Impact Summit 2026 marks a defining global inflection point: the transition from dialogue to demonstrable impact. Anchored in the principles of People, Planet, and Progress, it envisions a future where AI advances humanity, fosters inclusive growth, and safeguards our shared planet.

For more information: https://impact.indiaai.gov.in/

Organised by Impact Academy & Lucid Computing

Held at the India AI Impact Summit 2026, this session brought together government officials, security researchers, and Global South policy experts to explore hardware-level verification as a practical path to closing the AI trust gap, making data-protection commitments not just promised, but provable.

Panel Members

S. Krishnan

Secretary, Ministry of Electronics & IT, Government of India

Renata Dawn

Director of Tech Diplomacy, Simon Institute for Longterm Governance

Eileen Donahoe

Human Rights Activist & former United States Ambassador to the UNHRC

Our Speakers

Gaia Marcus

Director, Ada Lovelace Institute

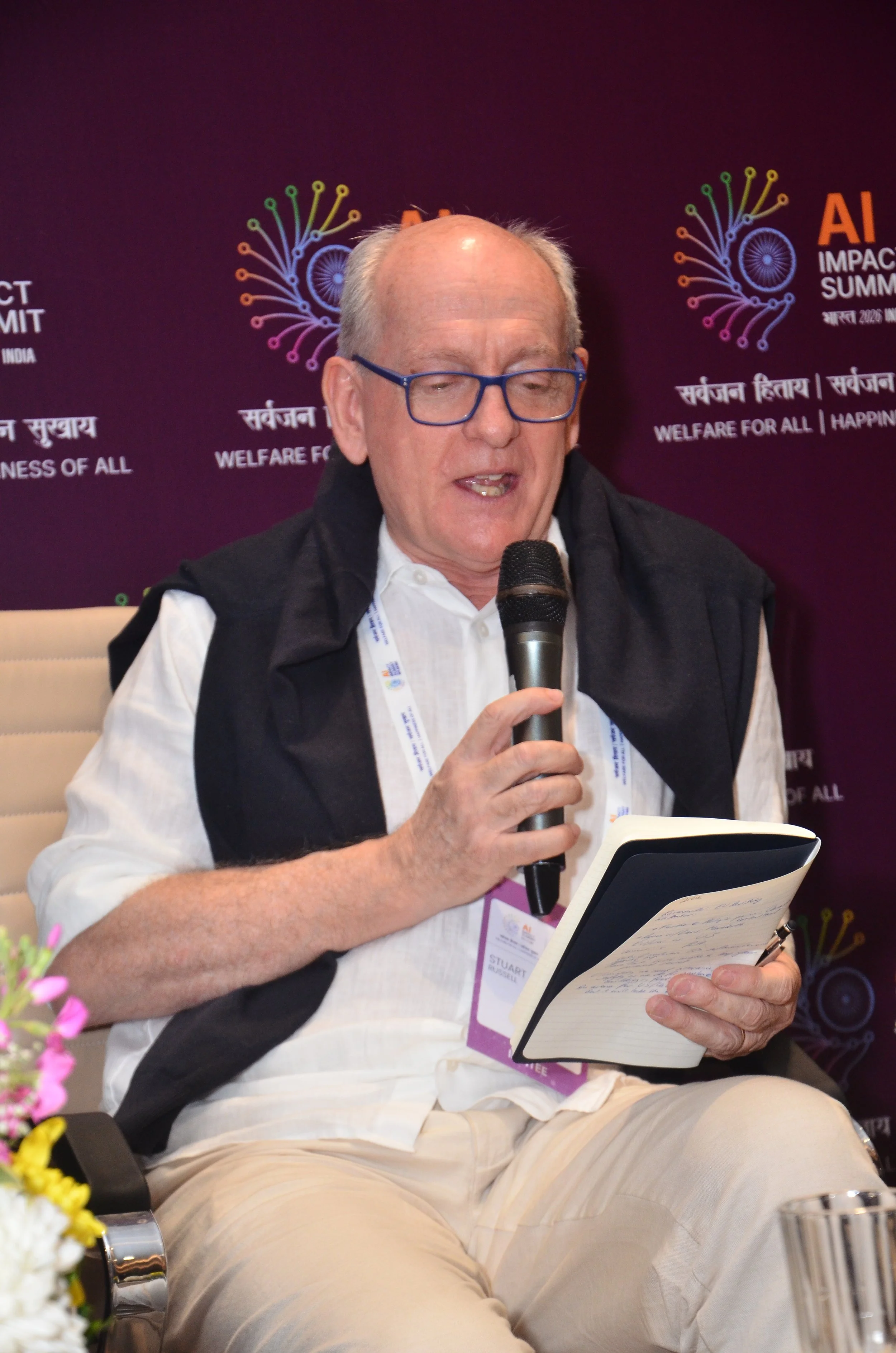

Stuart J. Russell

Distinguished Professor of Computer Science, UC Berkeley

Adam Gleave

CEO, FAR.AI

Robert Trager

Co-director, Oxford Martin AI Governance Initiative

Duncan Cass-Beggs

Executive Director, Global AI Risk Initiative, CIGI

Key Takeaways

1. India is Backing Hardware Sovereignty with Real Money and Policy: Secretary S. Krishnan announced that India has launched Semiconductor Mission 2.0, that Micron's fab is going live (India's first commercial chip production), that AI compute is subsidized at ₹65 per GPU-hour, roughly ¼ of global rates, and that the Budget now incentivizes global data centers to base themselves in India. The government is making a serious, long-term bet on hardware-led AI sovereignty.

2. True Sovereignty is About Verifiable Trust, Not Ownership: No country, not even the US, owns its full AI stack. Stuart Russell argued that chasing ownership is a dead end; what matters is formal verification, meaning cryptographically provable assurances that systems do what they claim. This reframes sovereignty from a geopolitical aspiration to a technical engineering problem.

3. Hardware is the Only Realistic Governance Enforcement Layer: Software can be written by anyone, anywhere, so it cannot be regulated at scale. Hardware, by contrast, flows through a handful of global chokepoints. Embedding governance at the chip level via Trusted Execution Environments and proof-carrying code makes compliance enforceable and extremely hard to circumvent.

4. Verification Technology is Ready Now, and Lucid's Demo Proved It: Lucid Computing demonstrated a deployable platform where an AI agent can be spun up with cryptographic proofs of data localization, PII compliance, and sovereignty, plus an AI Passport, all independently verifiable by any third party. The gap between governance theory and working technology is closing fast.

5. Without Verification, International AI Coordination Will Fail: Both Trager and Russell warned that geopolitical racing dynamics will cause corners to be cut on safety, exactly as in historical arms races. Verification is the only mechanism that makes international agreements credible. The window to build this infrastructure is now, before the critical political moment passes, as it did for the Comprehensive Test Ban Treaty.